27 February 2026, by Dr. Michael Peichl

No more tool clutter! How Lakeflow and Lakebase radically simplify data architecture.

The cost of fragmentation: Why consolidation is necessary

Troubleshooting in modern data landscapes is often inefficient: when a component fails, engineers have to compare logs from several systems to find the cause. This fragmentation is at the root of many stability issues.

Architectures that have grown over many years often resemble a patchwork quilt: specialised tools such as Fivetran or Qlik for ingestion, Apache Kafka for streaming, dbt for transformation logic and Apache Airflow for orchestration must be kept in sync.

Every interface between these components introduces a break in the metadata and adds maintenance effort. Data engineers today spend a significant portion of their working time maintaining these integrations (the ‘integration tax’) instead of developing value-adding data products.

The Databricks Data Intelligence Platform aims to eliminate this integration effort at the architectural level. The following sections look at how such a consolidated infrastructure is structured technically.

Lakeflow: Holistic data flows instead of isolated tools

Instead of operating a separate licence and infrastructure for each process step, Lakeflow views the data pipeline as an end-to-end process – from source to delivery.

Resilient ingestion (Lakeflow Connect)

Direct extraction from enterprise systems (such as SAP or Salesforce) puts load on the source systems, especially when a failed transformation means the data has to be extracted again.

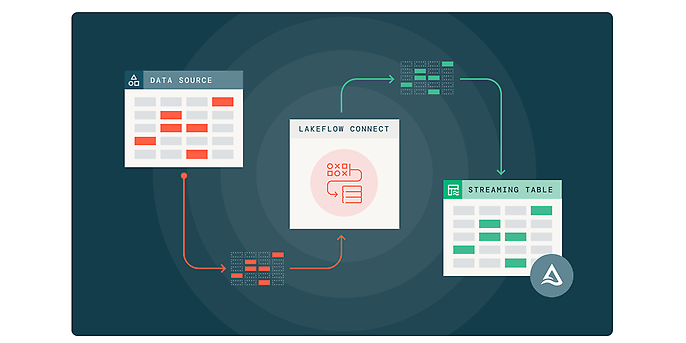

Lakeflow Connect addresses this with a gateway architecture. The data is first extracted and buffered in a Unity Catalog volume (staging). Only then is it processed.

The advantage: transformations can be restarted as often as needed without querying the production ERP system again. Functions such as change data capture (CDC) and automatic schema detection are integrated as standard.
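The staging idea can be illustrated with a minimal sketch in plain Python. It does not use the Lakeflow Connect API: `SourceSystem`, `stage` and `transform` are hypothetical names, and a temporary directory stands in for the Unity Catalog volume. The point is only that a failed transformation is retried against the staged copy, so the source is queried once.

```python
import json
import tempfile
from pathlib import Path


class SourceSystem:
    """Hypothetical stand-in for an ERP system; counts how often it is queried."""

    def __init__(self, rows):
        self._rows = rows
        self.query_count = 0

    def extract(self):
        self.query_count += 1
        return list(self._rows)


def stage(source, staging_dir):
    """Extract once and persist the raw rows in the staging directory."""
    staged_file = Path(staging_dir) / "orders.json"
    staged_file.write_text(json.dumps(source.extract()))
    return staged_file


def transform(staged_file, fail=False):
    """Read only from staging; this function never touches the source system."""
    if fail:
        raise ValueError("simulated transformation bug")
    rows = json.loads(Path(staged_file).read_text())
    return [{**row, "amount_eur": row["amount_cents"] / 100} for row in rows]


source = SourceSystem([
    {"order_id": 1, "amount_cents": 1250},
    {"order_id": 2, "amount_cents": 990},
])

with tempfile.TemporaryDirectory() as staging_dir:
    staged_file = stage(source, staging_dir)
    try:
        transform(staged_file, fail=True)  # first attempt fails
    except ValueError:
        pass
    result = transform(staged_file)  # the retry reads from staging only

print(source.query_count, len(result))
```

Because extraction and transformation are decoupled by the staged file, the number of retries has no effect on `source.query_count`.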

Source: Incremental reading and writing in LakeFlow Connect (LakeFlow Connect | Databricks)

Event processing without overhead: Zerobus

Until now, processing real-time data (IoT, clickstreams) often required the operation of a separate Kafka cluster.

Zerobus Ingest now offers a native, serverless alternative. Clients write records directly to Delta tables via a gRPC connection. For pure ingestion scenarios, this eliminates the administrative overhead of a complex message queue infrastructure.

- Performance: With optimised configuration, data is available for queries in less than a second.

- Differentiation from Kafka: Zerobus is ideal for direct ingestion. For scenarios with complex stream processing logic prior to ingestion or geo-replication across data centres, Kafka or Flink remain the preferred technologies.

Source: Zerobus Ingest (Announcing the Public Preview of Zerobus Ingest | Databricks Blog)

From ‘how’ to ‘what’: Declarative ETL

Traditional Spark jobs or Airflow DAGs often consist largely of infrastructure code (checkpointing, partitioning, merge logic).

Lakeflow Pipelines (Lakeflow Spark Declarative Pipelines | Databricks on AWS), the further development of Delta Live Tables, relies on a declarative paradigm. The engineer defines the target state, not the process path: ‘Create a streaming table based on source X, remove duplicates and historise changes.’
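To make clear how much logic hides behind ‘remove duplicates and historise changes’, here is a minimal sketch of that bookkeeping in plain Python. It is not the Lakeflow API and says nothing about how the engine works internally; `Version`, `apply_changes` and the event fields `seq`, `key`, `op` and `value` are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Version:
    key: str
    value: str
    valid_from: int
    valid_to: Optional[int] = None  # None marks the currently valid version


def apply_changes(history, changes):
    """Apply change events in sequence order and keep every old version."""
    for change in sorted(changes, key=lambda c: c["seq"]):
        current = next(
            (v for v in history if v.key == change["key"] and v.valid_to is None),
            None,
        )
        if current is not None:
            if change["op"] == "upsert" and current.value == change["value"]:
                continue  # duplicate event: nothing to historise
            current.valid_to = change["seq"]  # close the open version
        if change["op"] == "upsert":
            history.append(Version(change["key"], change["value"], change["seq"]))
    return history


# Events arrive out of order and contain one duplicate.
changes = [
    {"seq": 3, "key": "c1", "op": "upsert", "value": "Munich"},
    {"seq": 1, "key": "c1", "op": "upsert", "value": "Berlin"},
    {"seq": 2, "key": "c1", "op": "upsert", "value": "Berlin"},
    {"seq": 4, "key": "c1", "op": "delete", "value": None},
]

history = apply_changes([], changes)
for version in history:
    print(version)
```

In a declarative pipeline, this sequencing, deduplication and closing of validity ranges is what the engineer no longer writes by hand.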

- Serverless operations: The engine decides autonomously between incremental updates and full refreshes. Maintenance jobs such as OPTIMIZE or VACUUM run automatically in the background.

- Native historisation (Auto CDC): Slowly Changing Dimensions (SCD Type 2) are implemented via standardised APIs – either via AUTO CDC INTO in SQL or create_auto_cdc_flow in PySpark. The engine automatically handles updates, inserts and deletes, including correct sequencing.

- Integrated quality assurance: Validation rules are defined directly on the data set using expectations. If data violates these rules (e.g. negative sales values), it can be automatically isolated and moved to quarantine tables without stopping the process.
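The quarantine pattern from the last point can be sketched in a few lines of plain Python. This is an illustration of the idea only, not the expectations API of the platform; `split_by_expectations` and the rule names are hypothetical.

```python
def split_by_expectations(rows, expectations):
    """Return (valid, quarantined); each quarantined row lists the failed rules."""
    valid, quarantined = [], []
    for row in rows:
        failed = [name for name, rule in expectations.items() if not rule(row)]
        if failed:
            quarantined.append({**row, "failed_expectations": failed})
        else:
            valid.append(row)
    return valid, quarantined


# Hypothetical rules: each maps a name to a predicate on a single row.
expectations = {
    "non_negative_sales": lambda row: row["sales"] >= 0,
    "has_customer": lambda row: row.get("customer_id") is not None,
}

rows = [
    {"customer_id": 1, "sales": 120.0},
    {"customer_id": 2, "sales": -15.0},
    {"customer_id": None, "sales": 30.0},
]

valid, quarantined = split_by_expectations(rows, expectations)
print(len(valid), [row["failed_expectations"] for row in quarantined])
```

Invalid rows are set aside together with the reason for the failure, while processing of the valid rows continues.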

Native orchestration: Lakeflow Jobs

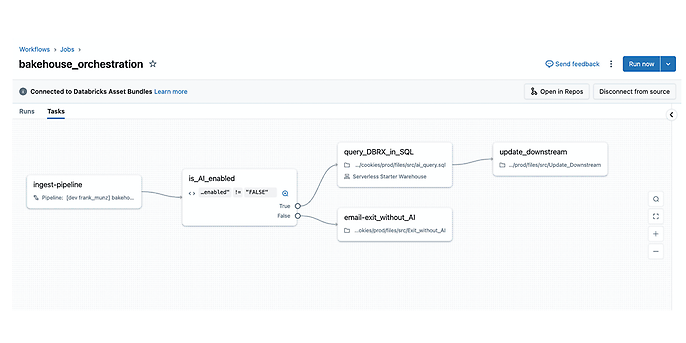

Orchestration is handled by Lakeflow Jobs (Lakeflow Jobs | Databricks on AWS). These now natively cover most complex orchestration requirements, often making external tools (such as a separate Airflow server) unnecessary:

- Control flow: Conditional executions (if/else logic) can be mapped directly in the job graph.

- Task values: Dynamic parameter exchange between tasks enables complex dependencies without external databases.

- Event-driven: Jobs can be started based on events (such as file arrival in the object store) instead of running only on a time basis.
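How control flow and task values interact can be shown with a toy job runner in plain Python. This is a conceptual sketch, not the Lakeflow Jobs API: `run_job`, the task functions and the key `row_count` are hypothetical, and a simple dictionary stands in for the task values.

```python
def run_job(tasks):
    """Run tasks in order; each task may read and write the shared task values."""
    task_values, executed = {}, []
    for name, condition, action in tasks:
        if condition(task_values):
            action(task_values)
            executed.append(name)
    return executed, task_values


def ingest(values):
    values["row_count"] = 0  # pretend the source delivered no new rows


def transform(values):
    values["transformed"] = True


def notify(values):
    values["message"] = "no new data, transformation skipped"


# Each task is (name, condition on task values, action).
tasks = [
    ("ingest", lambda v: True, ingest),
    ("transform", lambda v: v["row_count"] > 0, transform),  # if branch
    ("notify", lambda v: v["row_count"] == 0, notify),       # else branch
]

executed, task_values = run_job(tasks)
print(executed)
```

The downstream branch is chosen from a value that an upstream task published, without an external database holding that state.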

Source: Lakeflow Jobs: Naturally managed orchestration for any workload (Lakeflow Jobs | Databricks)

Lakebase: Convergence of OLTP and OLAP

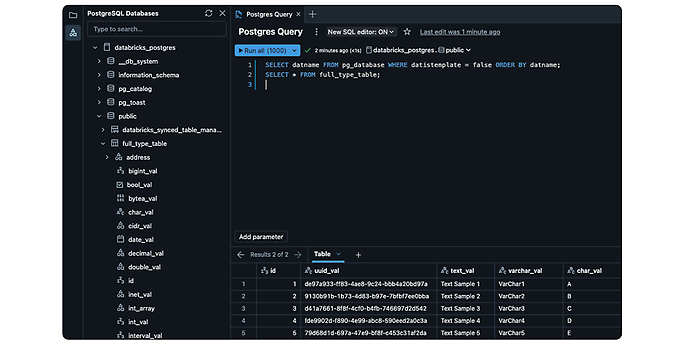

One of the most significant architectural innovations concerns the database layer. Previously, there was a strict separation: Databricks for analytics (OLAP) and external relational databases (such as Postgres) for operational applications. This inevitably led to data silos and the need for synchronisation.

Source: Lakebase: Postgres for data apps and AI agents (Lakebase | Databricks)

Zero-ETL architecture

Lakebase (Lakebase | Databricks) eliminates this separation. It is a fully managed, PostgreSQL-compatible database within the platform. Developers use it for operational workloads with the usual low latency.

The architectural highlight: Lakebase replicates transaction logs directly into the Delta format via an internal mechanism.

The result: a zero-ETL workflow in which operational data is available in real time for analysis in the lakehouse without manual pipelines.

Database branching: CI/CD for data

For DevOps teams, database branching in Lakebase is an essential quality assurance feature. While branching code is trivial, cloning databases has been resource-intensive until now.

Lakebase uses copy-on-write technology: a complete clone of the production database can be created in a fraction of a second. Since the branch and the original share the physical data blocks until changes are made, the branch incurs hardly any additional storage costs.
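The copy-on-write principle itself can be sketched in plain Python. This is a strongly simplified model and not how Lakebase is implemented: the class `Branch` and its page table are assumptions made for illustration. A branch copies only references to the data blocks, and a write replaces the reference in one branch only.

```python
class Branch:
    """A database branch modelled as a mapping from page number to data block."""

    def __init__(self, pages):
        self._pages = pages

    def branch(self):
        # Copy only the page table; the blocks themselves stay shared.
        return Branch(dict(self._pages))

    def read(self, page_no):
        return self._pages[page_no]

    def write(self, page_no, data):
        # Point this branch at a new block; other branches keep the old one.
        self._pages[page_no] = data

    def shared_blocks(self, other):
        """Count the pages that still reference the very same block object."""
        return sum(
            1 for no, block in self._pages.items()
            if other._pages.get(no) is block
        )


prod = Branch({0: b"customers", 1: b"orders", 2: b"invoices"})
dev = prod.branch()          # no data block is copied here
dev.write(1, b"orders_v2")   # e.g. a schema migration under test

print(prod.read(1), dev.read(1), dev.shared_blocks(prod))
```

Only the changed page consumes additional storage; production data remains untouched by the write in the branch.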

Use cases:

- Low-risk testing of schema migrations on real data.

- Isolated environments for developing and testing AI agents.

Unity Catalog: Consistent governance

What sets this approach apart from a heterogeneous tool landscape is the unifying layer: Unity Catalog. Regardless of whether data flows in via Zerobus, is extracted via Lakeflow Connect or is created in Lakebase, all metadata is stored centrally.

This enables seamless data lineage throughout the entire data lifecycle. Governance thus becomes an integral part of the architecture rather than something bolted on afterwards.

Conclusion: Consolidation as a driver of efficiency

The introduction of Lakeflow and Lakebase marks a paradigm shift from fragmented individual tools to an integrated platform.

Data engineers are relieved of the burden of maintaining fragile interfaces and can focus on developing robust data products. In addition to technical stability, this consolidation also offers considerable economic advantages. In the next part, we will examine the monetary impact of this modernisation and how legacy systems can be replaced.

Systematic architecture modernisation

We support you in introducing Lakeflow, Zerobus and Lakebase. From evaluating the current architecture to implementing scalable platforms, we accompany you throughout your modernisation process.